Bounding box annotation cost scales with object density, class complexity, required IoU thresholds, and QA depth. Loose boxes...

Blog

How to Build a Knowledge Base That Actually Makes RAG Reliable

The most common failure mode in enterprise RAG programs is not the language model. It is the knowledge...

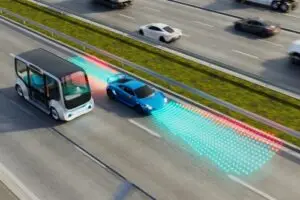

How Construction Zone Data Gaps Cause Autonomous Vehicle Failures

Construction zones are among the most demanding scenarios for autonomous vehicle perception systems. The environment changes faster than...

Why Your GenAI Deployment Is Only as Good as the Data Behind

I’ve talked to many enterprise teams that are frustrated with their GenAI programs. The model they selected is...

Human Feedback Training Data Services: Where RLHF Ends and What Comes Next

Human feedback training data services are specialized data pipelines that collect, structure, and quality-control the human preference signals...

AI Data Operations: The Operating Model Behind Every Scaled LLM Program

Most GenAI programs fail between the pilot and production, and the reason is almost always the data...

Annotation for Night Driving: What AI Perception Models Need to See in

A perception model trained on daytime data does not automatically extend to nighttime conditions. The visual characteristics of...

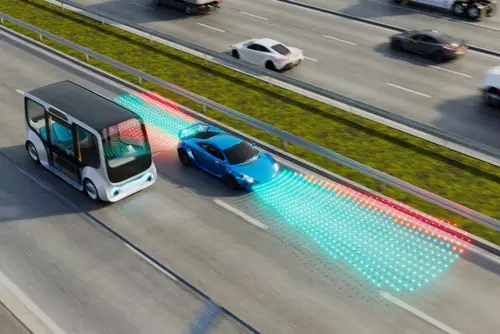

V2X Communication and the Data It Needs to Train AI Safety Systems

A single autonomous vehicle perceiving the world through its own sensors has hard limits on what it can...

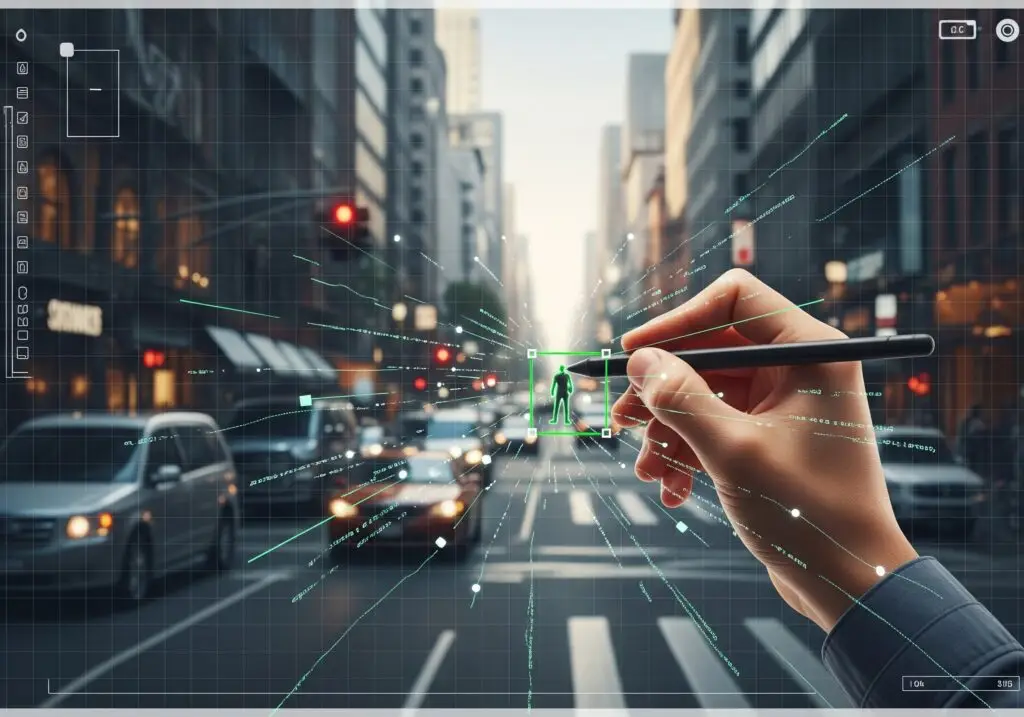

Why Annotation Taxonomy Design Is the Most Overlooked Step in Any AI

Every AI program picks a model architecture, a training framework, and a dataset size. Very few spend serious...

What Is Occupancy Grid Mapping and Why Autonomous Vehicles Need It

Object detection has been the dominant paradigm for autonomous vehicle perception. A model identifies a car, a pedestrian,...

How to Write Effective Annotation Guidelines That Annotators Actually Follow

Most annotation quality problems start with the guidelines, not the annotators. When agreement scores drop, the instinct is...

Red Teaming for GenAI: How Adversarial Data Makes Models Safer

A generative AI model does not reveal its failure modes in normal operation. Standard evaluation benchmarks measure what...
